How Novice Users Adopt a Voice Assistant

TL;DR

I designed and conducted a mixed-methods study tracking 16 novice users over one week to understand how first impressions of a voice assistant form, what drives attitude change, and why most participants reversed their intention to adopt.

The study revealed that poor discoverability, ambiguous error feedback, and expectation mismatches driven by marketing were the primary causes of trust erosion — findings that remain directly relevant to the design of any AI product that depends on user adoption.

When Amazon launched the Echo in 2014, smart speakers were a genuinely new kind of product — not a screen, not a phone, not a computer. Something you talked to. By 2019, adoption was growing fast. But alongside the growth, I kept noticing something: many users picked up a smart speaker, tried it for a few days, and quietly stopped. The device ended up on a shelf.

Very little research explained why. Most studies focused on experienced users, leaving a gap around what actually happened at the beginning — what novice users expected, how they behaved in their very first interactions, and what determined whether they stayed or gave up.

This study set out to answer that question, specifically for German-speaking novice users — a population that had received almost no research attention despite being a primary market for smart speaker manufacturers.

The research was conducted as part of my MSc in Usability Engineering at Hochschule Rhein-Waal, in collaboration with my supervisors Karsten Nebe and Tobias Limbach.

Research Questions

Three questions structured the study:

- Q1. Do novice users’ perceptions and attitudes towards Amazon Alexa change as they use it — and how?

- Q2. What causes the change?

- Q3. How do first-time users interact with Amazon Alexa?

Study Design

The study used a mixed-methods approach, combining quantitative measurement of user experience with qualitative exploration of attitudes, behavior, and first interactions.

Participants. Sixteen individuals with no prior experience with smart speakers took part: eight male and eight female, aged 23 to 50. Fourteen were native German speakers; the other two were highly proficient in German. All interactions were conducted in German.

Introductory interview. Each participant began with a 30-minute interview covering their knowledge of Alexa, mental models, expectations, and attitudes. Immediately after, participants set up an Amazon Echo Dot and explored it freely for approximately 15 minutes — without guidance. This unguided exploration captured natural first interactions rather than goal-driven ones. Participants completed the User Experience Questionnaire (UEQ) at the end of this session.

One-week private use. Each participant took an Amazon Echo Dot home for seven days, using it however they liked with one condition: all interactions in German. Each day, participants submitted a brief diary report noting their most memorable interactions and frequency of use.

Retrospective interview. After one week, participants returned for a final interview reviewing impressions and overall attitudes, and completed the UEQ again. All interviews were audio-recorded. Quantitative data was analyzed using the UEQ evaluation tool; qualitative data was analyzed thematically.

What Users Expected Before Use

High adoption intent. Before using Alexa, 81% of participants (13 of 16) said they would like to adopt a smart speaker. They described it primarily as a multi-functional personal assistant comparable to a smartphone: helpful, efficient, and capable across a wide range of tasks.

Data privacy concerns. Even before using the device, four participants raised concerns about data privacy — worrying about constant listening and data transfer to the cloud. Despite the concerns, all were willing to proceed.

Personality expectations. Nearly all participants said they would not expect human-like conversation. However, several anticipated that Alexa would learn about them over time and become more personalized — an expectation that would later contribute to disappointment.

How First-Time Users Actually Interacted

What people asked first. Most participants began by asking about the weather, playing music, or asking use-related questions — trying to understand what Alexa could actually do. Strikingly, many asked use-related questions directly to Alexa rather than checking the app, expecting the assistant itself to explain how to use it.

Language adaptation. All participants had to rework how they spoke. Alexa was intolerant of even two-second pauses mid-sentence. Users quickly learned to talk faster, reduce words, and simplify sentence structure.

Ambiguous feedback. Five participants expressed confusion about Alexa’s non-verbal feedback (a short tone or a fading light ring). When a request failed, users could not tell whether the problem was with voice recognition, with the request itself, or with a missing skill.
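To make the three failure causes concrete, here is a minimal sketch of how each could map to its own spoken message instead of a shared tone or light signal. The enum, the message wording, and the function name are hypothetical illustrations, not Alexa's actual behavior or API, and the messages are in English for readability even though the study ran in German.

```python
from enum import Enum


class FailureCause(Enum):
    """The three causes participants could not tell apart (hypothetical names)."""
    SPEECH_NOT_RECOGNIZED = "speech_not_recognized"
    REQUEST_NOT_UNDERSTOOD = "request_not_understood"
    SKILL_MISSING = "skill_missing"


# Illustrative wording only: each message says what went wrong and how to recover.
SPOKEN_FEEDBACK = {
    FailureCause.SPEECH_NOT_RECOGNIZED:
        "I could not hear that clearly. Please say it again.",
    FailureCause.REQUEST_NOT_UNDERSTOOD:
        "I heard you, but I did not understand the request. Try shorter wording.",
    FailureCause.SKILL_MISSING:
        "I understood the request, but it needs a skill that is not enabled yet.",
}


def spoken_error(cause: FailureCause) -> str:
    """Return a distinct spoken message for the given failure cause."""
    return SPOKEN_FEEDBACK[cause]


if __name__ == "__main__":
    for cause in FailureCause:
        print(f"{cause.value}: {spoken_error(cause)}")
```

The point of the sketch is only that the mapping is one-to-one: a user hearing any of these messages would know which kind of failure occurred.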

Accidental subscriptions. Four participants accidentally started service subscriptions during use — in one case, an Amazon Prime subscription triggered by a single “Yes” response to a confirmation prompt. This had an outsized effect on trust, transforming a minor frustration into a genuine safety concern.

How Attitudes Changed After One Week

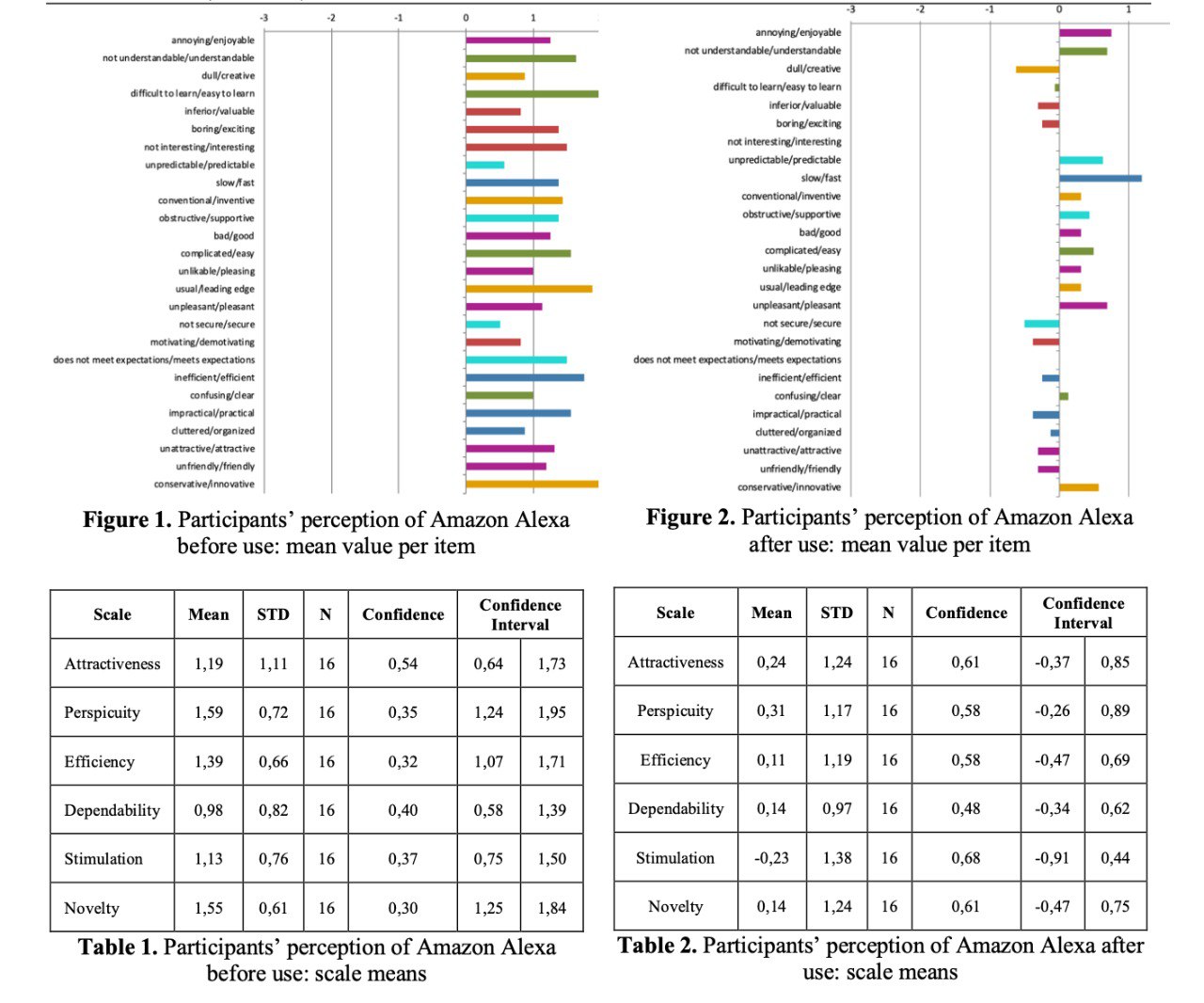

The quantitative results told a clear story. Before use, participants rated Alexa positively across all six UEQ scales — attractiveness, perspicuity, efficiency, dependability, stimulation, and novelty. After one week, every scale mean decreased significantly. The most notable drops were in stimulation (from 1.13 to −0.23) and attractiveness (from 1.19 to 0.24).
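As a quick check on these figures, the sketch below recomputes the two largest drops from the reported means and labels each value with the interpretation bands commonly cited for the UEQ (means above 0.8 read as positive, below -0.8 as negative, anything in between as neutral). The thresholds and helper names are assumptions for illustration; the study's own analysis used the UEQ evaluation tool, not this code.

```python
# Reported UEQ scale means (scale range -3 to +3): (before use, after one week).
REPORTED_MEANS = {
    "stimulation": (1.13, -0.23),
    "attractiveness": (1.19, 0.24),
}

# Assumed interpretation bands, following the commonly cited UEQ convention.
POSITIVE_THRESHOLD = 0.8
NEGATIVE_THRESHOLD = -0.8


def interpret(mean: float) -> str:
    """Label a scale mean as positive, neutral, or negative."""
    if mean > POSITIVE_THRESHOLD:
        return "positive"
    if mean < NEGATIVE_THRESHOLD:
        return "negative"
    return "neutral"


def summarize(means: dict) -> dict:
    """Compute the change and the before/after label for each scale."""
    summary = {}
    for scale, (before, after) in means.items():
        summary[scale] = {
            "change": round(after - before, 2),
            "before": interpret(before),
            "after": interpret(after),
        }
    return summary


if __name__ == "__main__":
    for scale, row in summarize(REPORTED_MEANS).items():
        print(f"{scale}: {row['before']} -> {row['after']} (change {row['change']})")
```

Read this way, both scales moved from the positive band into the neutral band, with stimulation ending below zero.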

From assistant to toy. Before use, the majority described Alexa as a multi-functional assistant helpful in daily life. After one week, nearly all described it as “rather a toy” — useful mainly for music playback, jokes, and entertainment.

Assigned personality. Participants consistently assigned human-like traits to Alexa — often negative: “arrogant”, “dumb”, “uninterested”. The assigned personalities reflected both the robotic flatness of interaction and the absence of any real back-and-forth.

Likelihood of adoption reversed. After one week, 87.5% of participants (14 of 16) responded negatively when asked whether they would adopt a smart speaker. The reasons they gave were limited functionality, immobility, and security concerns.

What Caused the Change

Poor discoverability and learnability. Users could not find out what Alexa was capable of. The device consistently redirected them to the app for use-related questions, but the app did not clearly answer those questions either. By the end of the study, only 62.5% of participants (10 of 16) knew that Alexa Skills existed.

Expectation mismatch driven by marketing. Smart speakers were marketed as intelligent, smart, and ready to help, language that set a specific mental model before any interaction. When the actual product failed to match that image, the gap produced cognitive dissonance. Participants described the product not as merely limited but as somehow deceptive.

Design Recommendations

- Spoken help and documentation — users expect a voice assistant to explain itself through voice. Help should be accessible through the assistant itself, not redirected to an app.

- Just-in-time instructions — surface relevant capability hints at the moment they become useful, rather than expecting users to discover capabilities independently.

- Clearer error feedback — error responses should clearly state what went wrong and how to recover, in spoken form.

- Confirmation patterns for financial interactions — given that users process only the final part of a spoken confirmation prompt, financial actions require more robust confirmation, ideally directing users to a secondary screen for explicit approval.
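The last recommendation can be sketched as a small decision rule: a spoken "yes" alone never completes a financial action, which instead waits for explicit approval on a secondary screen. Everything here (the class names, the `approved_on_screen` flag, the accepted replies) is a hypothetical illustration under that assumption, not an existing voice platform API.

```python
from dataclasses import dataclass
from enum import Enum, auto


class Decision(Enum):
    EXECUTE = auto()
    NEEDS_SCREEN_APPROVAL = auto()
    CANCELLED = auto()


@dataclass(frozen=True)
class PendingAction:
    """An action the assistant is about to perform (hypothetical model)."""
    description: str
    is_financial: bool


# Replies treated as affirmative in this sketch (English and German).
AFFIRMATIVE_REPLIES = {"yes", "ja"}


def resolve_confirmation(
    action: PendingAction,
    spoken_reply: str,
    approved_on_screen: bool = False,
) -> Decision:
    """Decide what to do after the user answers a confirmation prompt."""
    reply = spoken_reply.strip().lower()
    if reply not in AFFIRMATIVE_REPLIES:
        return Decision.CANCELLED
    # A bare spoken "yes" is not enough when money is involved.
    if action.is_financial and not approved_on_screen:
        return Decision.NEEDS_SCREEN_APPROVAL
    return Decision.EXECUTE


if __name__ == "__main__":
    subscription = PendingAction("Start a paid subscription", is_financial=True)
    print(resolve_confirmation(subscription, "Yes"))
    print(resolve_confirmation(subscription, "Yes", approved_on_screen=True))
```

Under this rule, the single "Yes" that started a subscription in the study would have paused at the screen approval step rather than completing the purchase.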

Key Contributions

- Mixed-methods study design — combined UEQ questionnaire (before and after), semi-structured interviews, and a one-week diary study with 16 novice German-speaking participants

- Unguided first-interaction observation — captured natural first encounters without moderator guidance

- Quantitative attitude measurement — documented a statistically significant decrease across all six UEQ scales after one week of use

- Qualitative attitude analysis — identified the causes of attitude change including expectation mismatch, personality attribution, poor discoverability, and accidental subscriptions

- Design recommendations — four actionable proposals for voice interaction designers addressing help systems, error feedback, just-in-time instructions, and financial confirmation patterns

Why This Still Matters

This study was conducted in 2019, when smart speakers were new enough that most users had never spoken to an AI before. The technology has moved on considerably since then. But the underlying dynamic has not.

Every time a new form of AI enters people’s lives (whether it is a voice assistant, a generative AI tool, an autonomous agent, or something that does not yet have a name) the same sequence plays out. People arrive with expectations shaped by marketing and word of mouth. They form a first impression within minutes. Something goes wrong, or works better than expected, and that moment shapes whether they stay or walk away.

What this research showed is that first impressions in AI adoption are not just emotional reactions. They are structural. They are driven by discoverability failures, ambiguous feedback, expectation mismatches built into how products are presented, and the fragility of trust when something unexpected happens. These are design problems. And they are as relevant to the AI products being built today as they were to a smart speaker in 2019.